The landscape of product development has fundamentally changed. Traditional minimum viable products that once took 6-12 months to validate are now being outpaced by intelligent systems that learn and improve autonomously. In 2026, AI MVP development gives startups a key advantage. It helps them test ideas quickly, cut costs, and provide personalized experiences from the start.

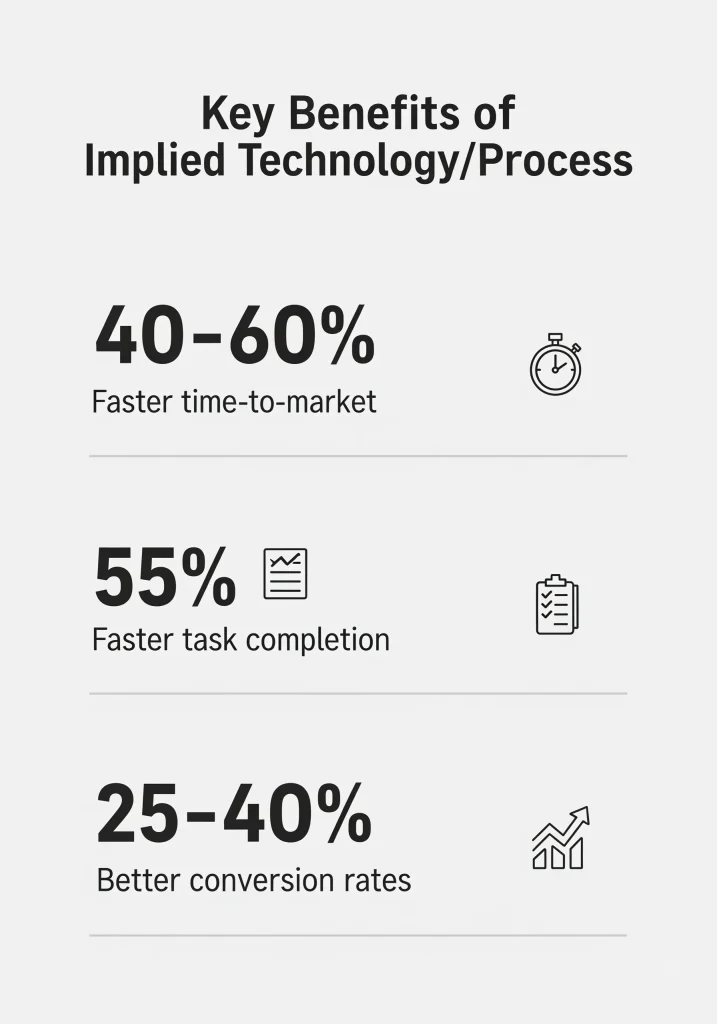

According to McKinsey’s latest research, companies using AI in early-stage product development reduce time-to-market by 40-60% compared to traditional approaches. This isn’t just about speed—it’s about creating products that become smarter with every interaction.

What Is AI MVP Development and Why It Matters

AI MVP development refers to building the simplest version of an intelligent product that uses machine learning algorithms or neural networks to solve a specific problem. Unlike traditional MVPs that deliver fixed functionality, AI-powered products continuously improve through data collection and algorithmic learning.

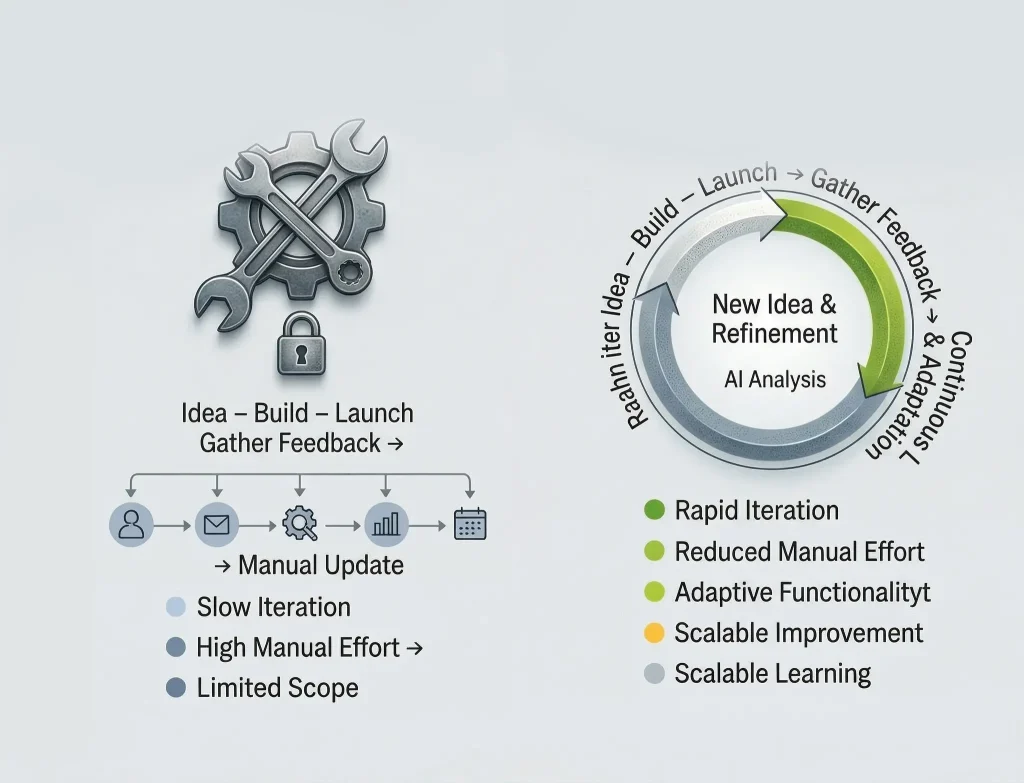

The core difference lies in evolution. A standard MVP requires manual updates based on user feedback: developers analyze comments, prioritize features, and push updates in development cycles. An AI MVP generates insights automatically by processing user behavior patterns and adjusting its predictions as new data arrives, with far less manual analysis.

Consider a recommendation engine: instead of manually curating suggestions based on demographics, an AI system analyzes individual browsing patterns and real-time behavior to deliver personalized recommendations that improve conversion rates by 25-40%. This intelligent automation transforms how quickly you validate product-market fit.

In a controlled experiment reported by GitHub, developers using Copilot completed a coding task 55% faster than those without it. For startups building an AI MVP, gains of this kind can translate into shorter iteration cycles and faster time-to-market.

Strategic Benefits Beyond Fast Deployment

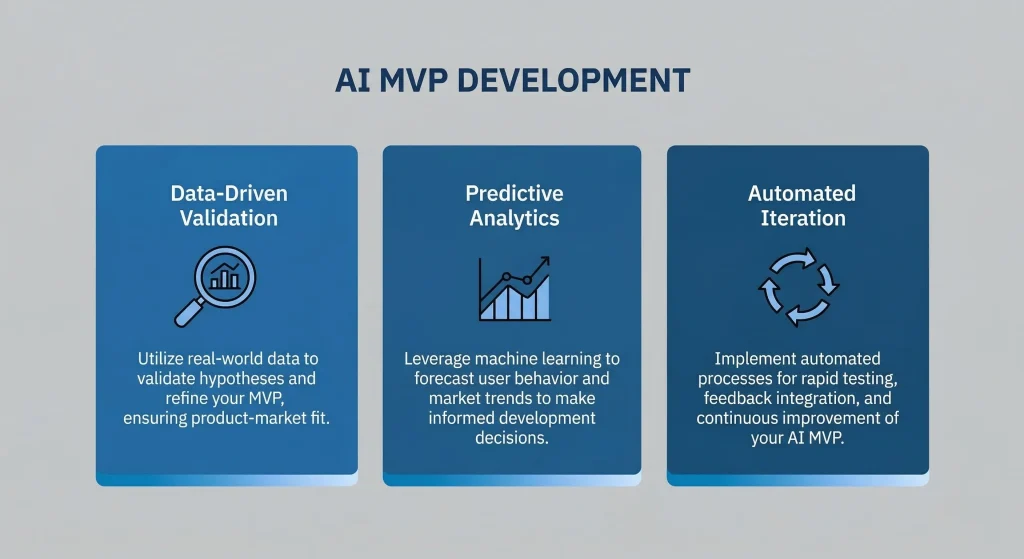

Data-Driven Validation That Eliminates Guesswork

Traditional product validation introduces weeks of delay between collecting feedback and implementing changes. AI MVP development captures every user interaction as structured data that can be used for training. Machine learning models assign probability scores to outcomes such as clicks, purchases, or churn events, helping you understand not just what users do but which behaviors predict what they do next.

The system automatically segments users into behavioral cohorts, revealing insights that would take analysts weeks to discover. For instance, users who engage with three specific features within their first session might have an 80% likelihood of becoming paying customers within 30 days.
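The cohort comparison described above can be sketched in a few lines of Python. This is a minimal, rule-based illustration rather than learned segmentation: the feature names, the record layout, and the sample users are all hypothetical.

```python
from collections import defaultdict

# Hypothetical feature names, used only for illustration.
KEY_FEATURES = {"import_data", "create_report", "invite_teammate"}


def conversion_by_cohort(users):
    """Return the paid-conversion rate for each behavioral cohort.

    Each user is a dict with 'first_session_features' (a set of feature
    names) and 'converted' (True if the user paid within 30 days).
    """
    counts = defaultdict(lambda: [0, 0])  # cohort -> [converted, total]
    for user in users:
        used_all = KEY_FEATURES <= user["first_session_features"]
        cohort = "all_key_features" if used_all else "other"
        counts[cohort][0] += int(user["converted"])
        counts[cohort][1] += 1
    return {cohort: conv / total for cohort, (conv, total) in counts.items()}


# Made-up sample data, not real results.
sample_users = [
    {"first_session_features": set(KEY_FEATURES), "converted": True},
    {"first_session_features": set(KEY_FEATURES), "converted": True},
    {"first_session_features": {"import_data"}, "converted": False},
    {"first_session_features": set(), "converted": False},
]
print(conversion_by_cohort(sample_users))
```

With real usage data, a large gap between the two rates is the kind of signal that would justify steering new users toward those features during onboarding.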

Predictive Analytics for Proactive Decisions

Rather than reacting to churn after it happens, AI MVPs predict which customers will likely leave before they decide. Classification models analyze engagement patterns and usage frequency to generate early warnings, enabling targeted retention campaigns.
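As a rough sketch of how a classification model turns engagement signals into an early warning, the snippet below scores users with a logistic function. The feature names, weights, and bias are hand-picked assumptions for illustration; a real model would learn them from labeled churn data.

```python
import math

# Illustrative weights only; a real model would learn these from labeled
# churn data rather than having them set by hand.
WEIGHTS = {"days_since_last_login": 0.15, "sessions_last_30d": -0.20}
BIAS = -1.0


def churn_probability(features):
    """Logistic score: squash a weighted sum of features into (0, 1)."""
    z = BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def at_risk(users, threshold=0.5):
    """Return IDs of users whose predicted churn probability meets the threshold."""
    return [uid for uid, feats in users.items()
            if churn_probability(feats) >= threshold]


users = {
    "active": {"days_since_last_login": 1, "sessions_last_30d": 20},
    "dormant": {"days_since_last_login": 25, "sessions_last_30d": 1},
}
print(at_risk(users))
```

The list returned by `at_risk` is what a retention campaign would consume; the threshold controls the trade-off between contacting too many users and missing some who leave.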

According to Gartner’s AI forecast, customer lifetime value prediction becomes operational from the earliest stages, allowing efficient budget allocation across user segments.

Automated Iteration Cycles

AI MVP development enables continuous learning loops without constant engineering effort. Models retrain automatically once new data reaches a set threshold, so recent patterns are incorporated into their predictions. The product can keep improving between releases, without a manual update or code deployment for each change.

AutoML systems test multiple algorithmic approaches simultaneously, optimizing parameters to maximize accuracy. What traditionally required weeks of data science experimentation now happens in hours, with systems validating improvements through holdout testing before deploying to production.
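The retraining threshold and the holdout check described above might look like the following sketch. The function names, the 1,000-row threshold, and the one-point minimum gain are assumptions chosen for illustration, and models are represented as plain callables.

```python
def should_retrain(new_labeled_rows, min_new_rows=1000):
    """Trigger retraining once enough new labeled data has accumulated."""
    return new_labeled_rows >= min_new_rows


def accuracy(model, holdout):
    """Fraction of holdout examples (features, label) the model gets right."""
    correct = sum(1 for features, label in holdout if model(features) == label)
    return correct / len(holdout)


def choose_model(current, candidate, holdout, min_gain=0.01):
    """Promote the candidate only if it beats the current model on holdout data."""
    if accuracy(candidate, holdout) >= accuracy(current, holdout) + min_gain:
        return candidate
    return current
```

Requiring a minimum gain guards against swapping models over differences that are just noise in a small holdout set.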

Step-by-Step Framework for Building Your AI MVP

Phase 1: Identify High-Impact Problems

Not every problem requires artificial intelligence. The first step involves identifying opportunities where machine learning creates measurable value that simpler approaches cannot deliver. Ask whether the problem generates continuous data streams—AI models need regular inputs to learn.

Evaluate if algorithmic decision-making performs better than rule-based logic. Problems involving pattern recognition, prediction, personalization, or optimization typically benefit from AI. However, if conditional statements solve the challenge effectively, save AI for more complex scenarios.

Ensure solutions produce immediate, visible impact. AI features taking weeks to demonstrate value struggle to drive engagement. The best AI MVPs show intelligence within the first session—accurate recommendations, relevant predictions, or automated insights that save time.

Phase 2: Define Precise Use Cases with Clear Metrics

Vague objectives like “use AI to improve the product” lead to wasted resources. Specify exactly what your system should accomplish: “Predict which users will upgrade within 7 days with 75% accuracy” or “Reduce support tickets by 30% through automated responses.”

Document inputs your model receives and outputs it generates. A churn prediction model might consume engagement data and billing information to produce probability scores. Establish baseline performance metrics before building anything—this creates benchmarks for evaluating whether AI adds value.
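One simple baseline for a classification use case is the accuracy of always predicting the most common outcome. The sketch below assumes binary labels and reuses the 75% accuracy target from the example objective; the function names are illustrative.

```python
from collections import Counter


def majority_baseline_accuracy(labels):
    """Accuracy of always predicting the most common label."""
    most_common_count = Counter(labels).most_common(1)[0][1]
    return most_common_count / len(labels)


def beats_baseline(model_accuracy, labels, target=0.75):
    """True if the model clears both the naive baseline and the stated target."""
    baseline = majority_baseline_accuracy(labels)
    return model_accuracy > baseline and model_accuracy >= target


# Made-up labels: 8 of 10 users did not upgrade.
labels = [0] * 8 + [1] * 2
print(majority_baseline_accuracy(labels))
print(beats_baseline(0.78, labels))
```

In this made-up sample, a model with 78% accuracy meets the 75% target yet still loses to the trivial "nobody upgrades" guess, which is why the baseline needs to be measured first.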

Phase 3: Collect Minimum Viable Data

Data quality determines model performance more than algorithmic sophistication. Audit existing data sources—user logs, transaction records, customer interactions. Assess whether datasets contain signals your model needs for accurate predictions.

For supervised learning, ensure proper labeling exists or can be generated efficiently. Deep learning models typically need thousands of examples, while simpler algorithms like random forests work with hundreds. Vista SysTech Limited specializes in building robust data pipelines supporting AI MVP development from deployment through scaling.

If sufficient data doesn’t exist, implement tracking systems immediately. Add event logging for key actions, set up A/B testing frameworks, or create collection workflows gathering information during normal usage.
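Event logging can start very small. The sketch below writes one JSON line per user action; the field names and the `log_event` helper are hypothetical, and the in-memory buffer stands in for a log file or message queue.

```python
import io
import json
import time


def log_event(stream, user_id, action, properties=None, timestamp=None):
    """Append one user action to the stream as a single JSON line."""
    event = {
        "ts": time.time() if timestamp is None else timestamp,
        "user_id": user_id,
        "action": action,
        "properties": properties or {},
    }
    stream.write(json.dumps(event) + "\n")
    return event


buffer = io.StringIO()  # stand-in for a log file or message queue
log_event(buffer, "u-123", "clicked_upgrade", {"plan": "pro"}, timestamp=0)
print(buffer.getvalue())
```

One event per line keeps the log easy to append to during normal usage and easy to load later as training data.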

Phase 4: Build a Functional Prototype

Start with pre-trained models or well-documented libraries solving similar problems. Hugging Face provides state-of-the-art natural language processing models, while OpenAI offers powerful APIs for text generation and analysis.

Fine-tuning existing models on your dataset reduces development time dramatically. This approach often achieves 80-90% of custom model performance with 20% of the effort. Focus exclusively on core AI capability during prototyping—skip advanced features and complex UI.

Phase 5: Create a Minimal Interface for Testing

Your AI model needs a simple interface allowing real users to interact with predictions. A basic web application built with Flask or FastAPI for backend and React for frontend provides sufficient functionality.

Display outputs in clear formats without technical jargon. If predicting churn probability, show simple percentages or risk levels rather than raw scores. Build feedback mechanisms that capture user reactions: thumbs-up/down buttons or accuracy ratings help you understand where models perform well.
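Turning a raw score into a readable label takes only a small helper. In this sketch the 0.4 and 0.7 cut-offs are illustrative assumptions that should be tuned with real users.

```python
def risk_label(probability):
    """Translate a churn probability into a label a non-technical user can read."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if probability >= 0.7:      # cut-offs are illustrative assumptions
        level = "High"
    elif probability >= 0.4:
        level = "Medium"
    else:
        level = "Low"
    return f"{level} risk ({probability:.0%})"


print(risk_label(0.82))  # High risk (82%)
print(risk_label(0.10))  # Low risk (10%)
```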

Phase 6: Deploy to Limited Users

Select 10-30 users from your target audience who face the problem daily. Monitor both technical performance and user behavior closely. Track prediction latency—responses should feel instantaneous, ideally under 200 milliseconds.
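Latency is easiest to judge from a high percentile rather than an average, since a few slow responses are what users notice. The sketch below times individual predictions and checks a 95th-percentile figure against the 200 ms target; the helper names and the choice of the 95th percentile are assumptions.

```python
import math
import time


def timed_call(predict, features):
    """Run one prediction and return (result, latency in milliseconds)."""
    start = time.perf_counter()
    result = predict(features)
    return result, (time.perf_counter() - start) * 1000.0


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(latencies_ms, budget_ms=200.0, pct=95):
    """True if the chosen percentile of observed latency stays under the budget."""
    return percentile(latencies_ms, pct) < budget_ms


# Made-up latency samples in milliseconds.
samples = [120, 80, 95, 300, 110]
print(percentile(samples, 50), within_budget(samples))
```

Here the median looks healthy at 110 ms, but the single 300 ms response pushes the 95th percentile over budget.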

Conduct structured interviews capturing qualitative insights that metrics miss. Focus particularly on understanding when users trust predictions versus when they question them. Trust calibration determines whether users adopt AI features long-term.

Phase 7: Iterate and Prepare for Scale

Use deployment data to retrain models with examples that reflect actual usage patterns, since real-world data often differs significantly from initial training sets. Optimize inference performance by shrinking the model, for example through quantization or distillation, while keeping any loss of accuracy small.

Implement continuous monitoring dashboards tracking performance metrics automatically. Establish A/B testing infrastructure allowing safe comparison of model versions in production.
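A common building block for comparing model versions is deterministic bucketing, so that each user always sees the same version. The following is a minimal sketch using a hash of the user ID; the experiment name, the `assign_variant` helper, and the 50/50 split are assumptions.

```python
import hashlib


def assign_variant(user_id, experiment, treatment_share=0.5):
    """Deterministically bucket a user into 'control' or 'treatment'."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # value in [0, 1)
    return "treatment" if bucket < treatment_share else "control"


# The same user always lands in the same group for a given experiment.
print(assign_variant("u-123", "churn-model-v2"))
```

Including the experiment name in the hash keeps assignments independent across experiments, and lowering `treatment_share` lets a new model start with a small slice of traffic.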

Essential Technologies and Tools

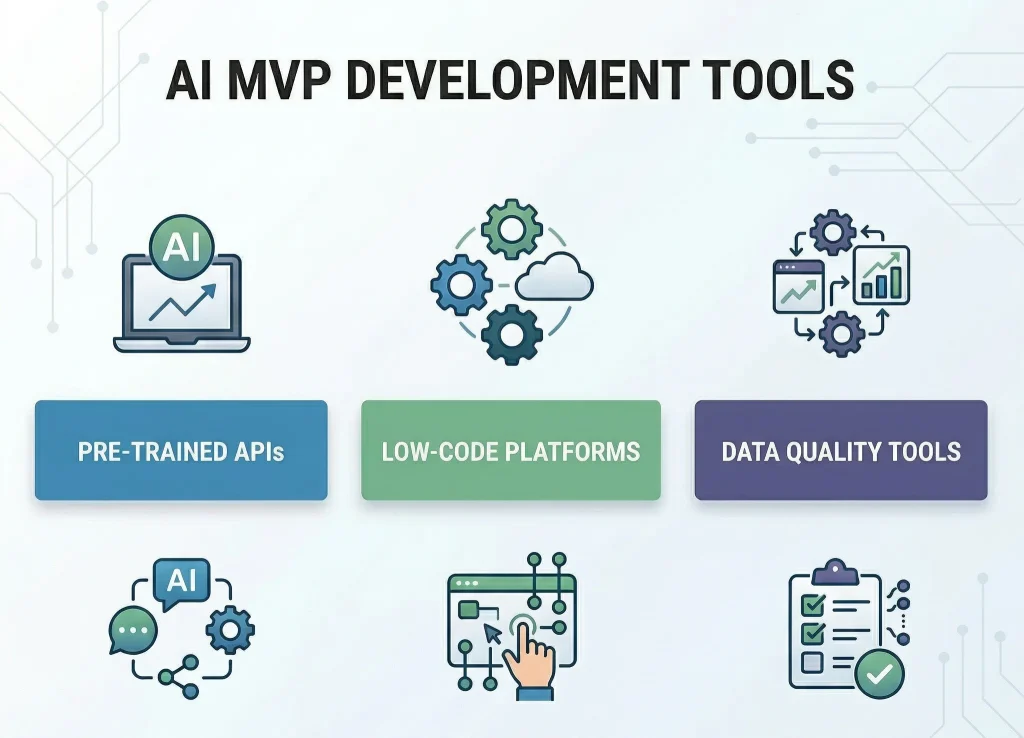

Pre-Trained Models and API Services

Modern AI MVP development leverages existing models rather than training from scratch. OpenAI’s API documentation provides powerful natural language capabilities through simple calls. Google’s Vertex AI offers pre-trained models for computer vision and structured data analysis.

AWS SageMaker includes dozens of pre-trained models for common tasks like text classification and image recognition. These models achieve production-quality results without requiring machine learning expertise.

Low-Code Platforms

For teams without dedicated data scientists, low-code platforms democratize AI development. Google AutoML and Azure Machine Learning Studio provide visual workflows for training custom models, handling complex technical details while exposing control for customization.

Data Quality Tools

Labelbox provides collaborative interfaces for annotating images, text, and audio with quality control features ensuring consistency. Scale AI offers managed labeling services with human-in-the-loop verification for teams without internal resources.

Cost Analysis for AI MVP Development

Budget planning requires understanding cost drivers across multiple categories. Total investment typically ranges from $25,000 to $180,000 depending on technical complexity and timeline constraints.

Development Team Expenses

The largest cost involves engineering talent. AI engineers command $100-$250 per hour depending on expertise and location. A typical AI MVP requires 2-4 months with teams of 3-5 specialists including machine learning engineers, full-stack developers, and product managers.

In-house hiring is expensive, with senior ML engineers earning $150,000-$300,000 annually plus benefits. Partnering with specialized firms like Vista SysTech Limited provides access to expert teams without long-term commitments, reducing risk while maintaining flexibility.

Infrastructure and API Costs

Cloud computing expenses vary based on model complexity and user scale. Early-stage MVPs with limited users might spend $500-$2,000 monthly on cloud services. API service fees apply when using pre-trained models—budget $0.002-$0.02 per call depending on service.
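Per-call pricing is easy to underestimate, so it helps to multiply it out. The sketch below uses the $0.002-$0.02 range quoted above; the user count and calls per user are hypothetical.

```python
def monthly_api_cost(users, calls_per_user_per_day, price_per_call, days=30):
    """Estimated monthly spend on a pay-per-call model API."""
    return users * calls_per_user_per_day * days * price_per_call


# Hypothetical usage: 500 users making 20 calls a day.
low = monthly_api_cost(500, 20, 0.002)
high = monthly_api_cost(500, 20, 0.02)
print(f"Estimated API spend: ${low:,.0f} to ${high:,.0f} per month")
```

Under these assumptions the estimate runs from roughly $600 to $6,000 a month, so at the upper end API fees could exceed the cloud hosting bill.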

Data Acquisition

If quality training data doesn’t exist, acquisition costs can exceed development expenses. Commercial datasets range from $1,000 for specialized sets to $50,000+ for comprehensive industry data. Manual labeling services cost $0.10-$5.00 per item depending on complexity.

Cost Optimization Strategies

Starting with pre-trained models and API services minimizes initial investment. Validate core concepts at $25,000-$50,000 before committing to custom development costing $100,000+. Focus exclusively on one high-value feature rather than multiple capabilities, because each additional component adds integration, data, and testing work that compounds overall complexity.

Critical Success Metrics

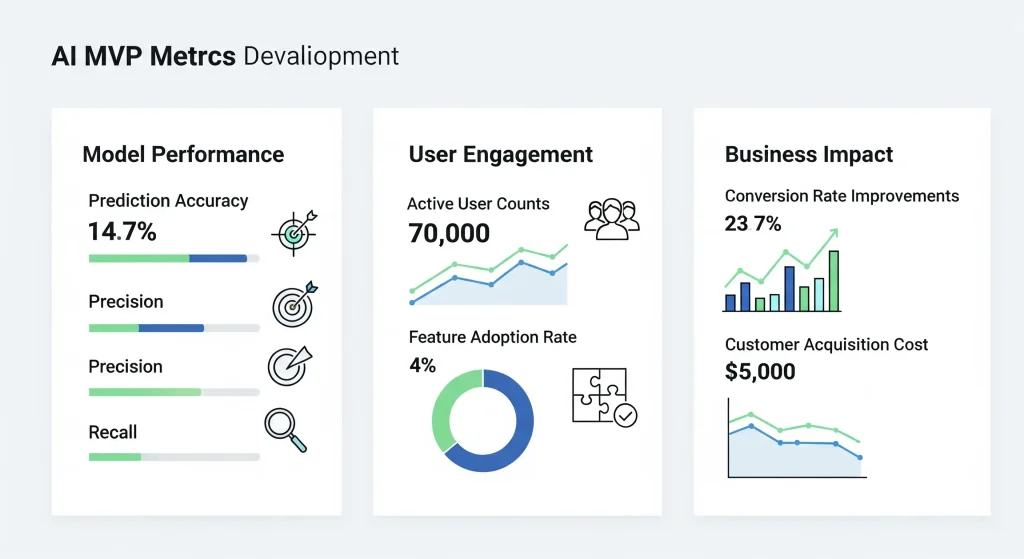

Model Performance Indicators

Prediction accuracy measures how often a model produces the correct output, which gives a straightforward benchmark for classification. On imbalanced datasets accuracy can mislead, so precision and recall offer deeper insight: high precision means most positive predictions are correct, while high recall means the model catches most of the actual positive cases.
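These three metrics can be computed directly from predictions and true labels. The sketch below assumes binary labels where 1 marks the positive class; the sample data is made up to show how accuracy alone can flatter a useless model.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


# Made-up example: 2 churners out of 10 users, and a model that never predicts churn.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [0] * 10
print(classification_metrics(y_true, y_pred))
```

The model scores 80% accuracy while catching none of the churners, which is exactly the case precision and recall are meant to expose.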

User Engagement Metrics

Daily and monthly active user counts reveal whether AI features drive engagement. Compare usage rates between users with AI access versus control groups. Feature adoption rate shows what percentage actually interact with capabilities—low adoption despite good performance might indicate poor positioning or UX friction.

Business Impact Metrics

Conversion rate improvements demonstrate AI’s business value directly. If recommendations increase purchase rates from 2.1% to 3.4%, you’ve generated measurable revenue lift. Customer acquisition cost changes reveal whether AI features improve marketing efficiency through intelligent targeting. According to MIT Sloan’s research on AI adoption, companies successfully implementing AI see 20-30% improvements in operational efficiency within the first year.
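When reporting a change such as the 2.1% to 3.4% purchase-rate example, it helps to state both the absolute and the relative lift, since they tell different stories. A short sketch, with an illustrative helper name:

```python
def conversion_lift(baseline_rate, new_rate):
    """Return (absolute lift in percentage points, relative lift as a fraction)."""
    absolute_points = (new_rate - baseline_rate) * 100
    relative = (new_rate - baseline_rate) / baseline_rate
    return absolute_points, relative


points, relative = conversion_lift(0.021, 0.034)
print(f"+{points:.1f} points, {relative:.0%} relative lift")
# prints: +1.3 points, 62% relative lift
```

The 1.3-point gain corresponds to about 62% more purchases from the same traffic, provided the comparison comes from a controlled test rather than a before-and-after snapshot.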

Common Pitfalls to Avoid

Overcomplicating Initial Solutions

Many teams build sophisticated AI systems when simpler approaches would validate hypotheses equally well. Begin with the simplest model that could possibly work. For many problems, logistic regression or random forests achieve 85-90% of neural network performance while requiring 70% less development time.

Insufficient Data Quality

Poor data quality represents the most common reason AI MVPs underperform. Models trained on noisy or inconsistent data produce unreliable predictions regardless of algorithmic sophistication. Invest in data quality before model complexity.

Ignoring Model Interpretability

Users resist AI recommendations they don’t understand or trust. Black-box models providing predictions without explanation create frustration. Choose interpretable models when possible—decision trees, linear models, and rule-based systems offer transparency building user confidence.

Future Trends in AI MVP Development

Democratization Through Better Tools

AI development barriers continue falling as tools become more accessible. Natural language interfaces now allow business users to build predictive models by describing problems in plain English. AutoML platforms achieve near-expert performance automatically, testing hundreds of architectures simultaneously.

Edge AI and Real-Time Intelligence

Processing AI workloads on devices rather than cloud servers enables instant responses and reduces infrastructure costs. Edge AI becomes particularly valuable for mobile applications where network latency impacts user experience. Real-time image analysis and voice processing happen locally without round-trip delays.

Multimodal Capabilities

Modern AI systems increasingly combine multiple input types—text, images, audio—in unified models. Multimodal understanding enables richer experiences and sophisticated problem-solving. MVPs can process documents with embedded images or analyze video content for insights.

Start Building Your AI MVP Today

The competitive advantages of AI MVP development compound over time. Every month spent in traditional development cycles represents missed opportunities to gather behavioral data and deliver personalized experiences. Early movers establish data advantages that later entrants struggle to overcome.

The technologies, tools, and expertise required are more accessible than ever. Pre-trained models eliminate months of algorithm development. Cloud infrastructure scales automatically from prototype to production. Development partners provide end-to-end support accelerating time-to-market while controlling costs.

Your intelligent product journey starts with identifying one high-value problem where AI creates measurable improvement. Focus on that capability, build a minimal version, deploy to real users, and iterate based on results. This disciplined approach validates whether AI adds genuine value before overinvesting in comprehensive systems.

The question isn’t whether AI will transform your product category; it is already doing so. The question is whether you’ll lead that transformation or scramble to catch up. AI MVP development provides the fastest path to understanding how machine learning creates value in your specific context with manageable risk.

Ready to explore how AI can accelerate your product development? Contact Vista SysTech Limited today to discuss your AI MVP requirements and receive a detailed project proposal tailored to your objectives, timeline, and budget constraints.